This article aims to provide readers with a comprehensive understanding of the Time of Flight (TOF) system, progressing from basic concepts to more advanced knowledge. The content includes an overview of TOF systems; detailed introductions to indirect TOF (iTOF) and direct TOF (dTOF), discussing system parameters, strengths and weaknesses, algorithms, etc.; and an exploration of various components within TOF systems, such as VCSELs, transmitting and receiving lenses, receiving sensors like CIS/APD/SPAD/SiPM, and driver circuits like ASIC.

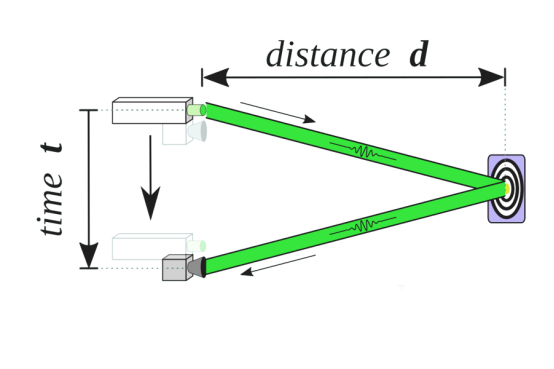

TOF, short for Time of Flight, is a method of measuring distance from the time light takes to travel that distance through a medium. It is applied predominantly in optical systems, and its principle is relatively straightforward. As illustrated, a light source emits a beam of light and the emission time is recorded. The light reflects off a target and is captured by a receiver, which records the reception time. The difference between these two times, denoted t, gives the distance d = c × t / 2, where c is the speed of light in the medium and the division by 2 accounts for the round trip.
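A minimal sketch of this calculation, assuming the light travels to the target and back (so the one-way distance is half the total path) and that the medium is air, approximated by the vacuum speed of light. The function and constant names are illustrative and not taken from any particular library.

```python
# Speed of light in vacuum (m/s); air is close enough for a sketch.
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def distance_from_round_trip(t_seconds: float, c: float = SPEED_OF_LIGHT_M_PER_S) -> float:
    """Return the one-way distance (m) for a measured round-trip time t."""
    if t_seconds < 0:
        raise ValueError("round-trip time must be non-negative")
    # The light travels to the target and back, so halve the path length.
    return c * t_seconds / 2.0


if __name__ == "__main__":
    # A 10 ns round trip corresponds to roughly 1.5 m.
    print(f"{distance_from_round_trip(10e-9):.3f} m")
```

The scale is worth noting: one nanosecond of timing error corresponds to about 15 cm of distance error, which is why timing precision dominates TOF system design.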

TOF is used in many fields. In consumer electronics, it supports facial recognition, camera autofocus, proximity sensing, motion interaction, gesture recognition, and AR. In robotics, such as vacuum robots and drones, it is used for obstacle avoidance; it is also used for 3D scene scanning. In the industrial and security sectors, it supports automated robots, people counting, smart parking systems, intelligent transportation, smart warehousing, and dimension measurement. LiDAR for smart driving also belongs to the TOF category, but this series does not focus on it.

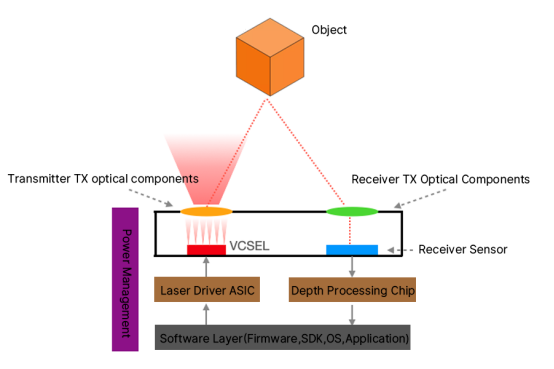

To achieve the distance measurement described above, a typical TOF system comprises the following parts:

Transmitter (Tx): a laser light source, primarily a VCSEL; a driver circuit (ASIC) to drive the laser; and optical components for beam control, such as collimating lenses or diffractive optical elements, plus filters.

Receiver (Rx): lenses and filters at the receiving end; sensors such as CIS, SPAD, or SiPM, depending on the TOF system; and an Image Signal Processor (ISP) for processing the large volume of data from the receiver chip.

Power management: stable current control for VCSELs, high voltage for SPADs, and similar requirements all call for robust power management.

Software layer: firmware, SDK, OS, and application layer.

The architecture shows how a laser beam, originating from the VCSEL and shaped by optical components, travels through space, reflects off an object, and returns to the receiver. Calculating the elapsed time of this round trip yields distance or depth information. Note that this architecture does not cover noise paths, such as sunlight-induced noise or multi-path noise from reflections, which we will discuss later.

TOF systems are primarily categorized by their distance measurement techniques: direct TOF (dTOF) and indirect TOF (iTOF), each with distinct hardware and algorithmic approaches. We'll initially outline their principles before delving into a comparative analysis of their advantages, challenges, and system parameters.

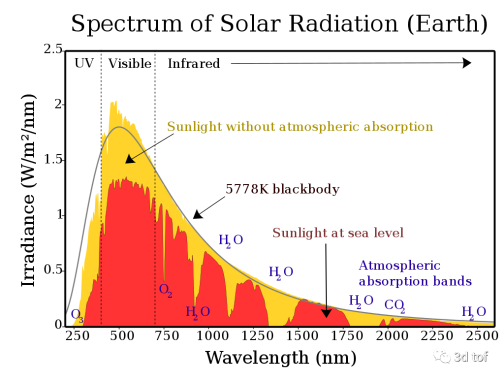

TOF's principle seems simple: emit a light pulse and detect its return to calculate distance. The complexity lies in distinguishing the returning light from ambient light. Two measures address this: emitting sufficiently bright light to achieve a high signal-to-noise ratio, and selecting wavelengths that minimize ambient-light interference. Another approach encodes the emitted light so that it is distinguishable upon return, much like signaling SOS with a flashlight.
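The idea of encoding the emitted light can be sketched as a correlation search. This is an illustrative toy model, not the scheme of any specific TOF product: it assumes a pseudo-random sequence of ±1 "chips" (standing in for on/off modulation with the DC level removed), Gaussian noise in place of ambient light, and hypothetical function names throughout.

```python
import random


def make_code(length, rng):
    """Pseudo-random +/-1 chip sequence used to modulate the emitter."""
    return [rng.choice((-1.0, 1.0)) for _ in range(length)]


def simulate_received(code, delay_chips, total_len, echo_gain, noise_std, rng):
    """Weak delayed echo of the code buried in Gaussian 'ambient' noise."""
    received = [rng.gauss(0.0, noise_std) for _ in range(total_len)]
    for i, chip in enumerate(code):
        received[delay_chips + i] += echo_gain * chip
    return received


def find_delay(code, received):
    """Slide the known code over the received signal; the best match marks the delay."""
    max_shift = len(received) - len(code)
    scores = [
        sum(c * received[shift + i] for i, c in enumerate(code))
        for shift in range(max_shift + 1)
    ]
    return max(range(len(scores)), key=scores.__getitem__)


if __name__ == "__main__":
    rng = random.Random(42)
    code = make_code(256, rng)
    # Echo amplitude is half the noise standard deviation in this demo.
    rx = simulate_received(code, delay_chips=37, total_len=512,
                           echo_gain=0.5, noise_std=1.0, rng=rng)
    print("estimated delay (chips):", find_delay(code, rx))
```

Because the noise is uncorrelated with the code, it averages out across the 256 chips while the echo adds up coherently, so the delay can be recovered even when each individual sample is dominated by noise.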

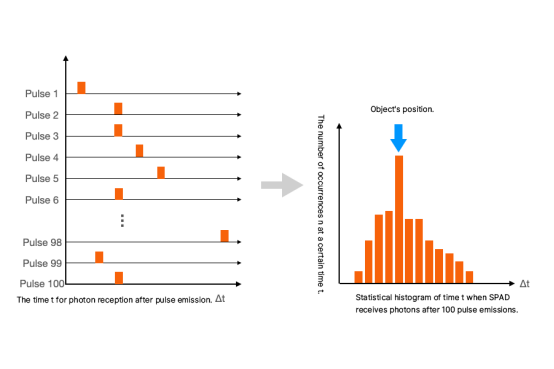

Direct TOF measures the photon's flight time directly. Its key component, the Single Photon Avalanche Diode (SPAD), is sensitive enough to detect single photons. dTOF employs Time Correlated Single Photon Counting (TCSPC): it records photon arrival times over many emitted pulses, builds a histogram of those times, and takes the most frequent time difference (the histogram peak) as the most likely flight time, from which the distance follows.
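The histogram step can be sketched with simulated timestamps. This is a simplified model under stated assumptions: signal photons arrive at the true flight time with Gaussian jitter, ambient photons arrive uniformly across the measurement window, and the peak bin alone is used for the estimate. All names and parameter values are illustrative.

```python
import random
from collections import Counter

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def simulate_photon_timestamps(true_distance_m, n_signal, n_ambient,
                               window_s, jitter_s, rng):
    """Generate arrival times: jittered signal returns plus uniform ambient hits."""
    t_flight = 2.0 * true_distance_m / SPEED_OF_LIGHT_M_PER_S
    signal = [rng.gauss(t_flight, jitter_s) for _ in range(n_signal)]
    ambient = [rng.uniform(0.0, window_s) for _ in range(n_ambient)]
    return signal + ambient


def estimate_distance(timestamps, bin_width_s):
    """Histogram the timestamps and convert the fullest bin to a distance."""
    histogram = Counter(int(t // bin_width_s) for t in timestamps if t >= 0.0)
    peak_bin, _ = histogram.most_common(1)[0]
    t_peak = (peak_bin + 0.5) * bin_width_s  # centre of the peak bin
    return SPEED_OF_LIGHT_M_PER_S * t_peak / 2.0


if __name__ == "__main__":
    rng = random.Random(0)
    stamps = simulate_photon_timestamps(
        true_distance_m=3.0, n_signal=500, n_ambient=2000,
        window_s=100e-9, jitter_s=100e-12, rng=rng)
    print(f"estimated distance: {estimate_distance(stamps, 500e-12):.2f} m")
```

In this sketch the bin width sets the distance resolution: a 500 ps bin corresponds to about 7.5 cm. Ambient photons outnumber signal photons four to one here, yet they spread across all bins while the signal concentrates in one or two, which is what makes the peak stand out.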

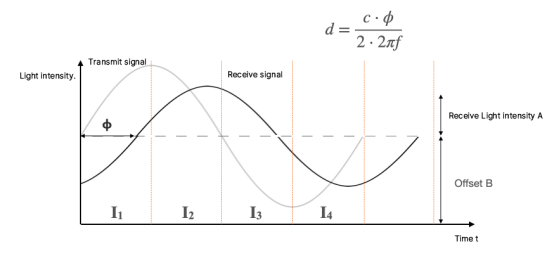

Indirect TOF calculates flight time based on the phase difference between emitted and received waveforms, commonly using continuous wave or pulse modulation signals. iTOF can use standard image sensor architectures, measuring light intensity over time.

iTOF is further subdivided into continuous wave modulation (CW-iTOF) and pulse modulation (Pulsed-iTOF). CW-iTOF measures the phase shift between emitted and received sinusoidal waves, while Pulsed-iTOF calculates phase shift using square wave signals.
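For CW-iTOF, the phase shift maps to distance through d = c × Δφ / (4π × f_mod), and the measurement wraps once the phase exceeds 2π. The sketch below also recovers the phase from four samples taken 90 degrees apart, which is one common demodulation scheme and is not described in this article; the sample model, sign convention, function names, and the 20 MHz modulation frequency are all assumptions made for illustration.

```python
import math

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def unambiguous_range(f_mod_hz):
    """Maximum distance before the phase wraps past 2*pi."""
    return SPEED_OF_LIGHT_M_PER_S / (2.0 * f_mod_hz)


def phase_from_four_samples(c0, c1, c2, c3):
    """Recover the phase shift from samples taken at 0, 90, 180 and 270 degrees.

    Assumes each sample follows offset + amplitude * cos(phase - theta_k);
    other sign conventions exist and would change the argument order.
    """
    return math.atan2(c1 - c3, c0 - c2) % (2.0 * math.pi)


def distance_from_phase(phase_rad, f_mod_hz):
    """Convert a phase shift to distance: d = c * phase / (4 * pi * f_mod)."""
    return SPEED_OF_LIGHT_M_PER_S * phase_rad / (4.0 * math.pi * f_mod_hz)


def simulate_samples(distance_m, f_mod_hz, amplitude=1.0, offset=2.0):
    """Ideal, noise-free correlation samples for a target at distance_m."""
    phase = 4.0 * math.pi * f_mod_hz * distance_m / SPEED_OF_LIGHT_M_PER_S
    return [offset + amplitude * math.cos(phase - k * math.pi / 2.0) for k in range(4)]


if __name__ == "__main__":
    f_mod = 20e6  # 20 MHz modulation, an illustrative value
    samples = simulate_samples(2.5, f_mod)
    phase = phase_from_four_samples(*samples)
    print(f"range limit: {unambiguous_range(f_mod):.2f} m")
    print(f"recovered distance: {distance_from_phase(phase, f_mod):.3f} m")
```

Taking differences of opposite samples cancels the constant offset, which in this model plays the role of ambient light. The trade-off is visible in `unambiguous_range`: a higher modulation frequency shortens the distance at which the phase wraps.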

Both dTOF and iTOF have their unique advantages and limitations. A detailed comparison from a system parameter perspective will follow in later sections.

TOF systems can also be categorized by the complexity of the information they provide: 1D TOF, 2D TOF, and 3D TOF. 1D TOF is simple single-point distance measurement. 2D TOF, commonly seen in vacuum robots, scans a line to map distances in the lower part of a room. 3D TOF combines a 2D sensor array with imaging lenses to produce three-dimensional spatial information.

This series will further explore these aspects, providing a deeper dive into TOF technologies and their applications.

From the web page https://faster-than-light.net/TOFSystem_C1/

Author: Chao Guang
